User Interaction Revolution

How AI is reshaping product design

In this post we are going to take a quick look at user interfaces, user interactions and what the advancements in Artificial Intelligence may mean for the future of how humans interact with computers and digital systems.

Table of contents

- An introduction to Human Machine Interfaces

- A brief history of the evolution of HMI

- How AI is transforming HMI

- A closer look into the future

An introduction to Human Machine Interfaces

Human machine interfaces (HMI for short) are the means by which systems (digital or computerized) allow humans (people like you and me) to interact with them. Most commonly, these interactions happen through web or native applications running on computers, smartphones, tablets and so on. These applications may include dashboards and forms, operated with a pointer, touch or a keyboard.

HMIs are an essential part of today’s digital world. We constantly rely on these interfaces to accomplish tasks such as finding a ride, ordering food, reading a book, accessing bank accounts, monitoring network traffic and much more. It is very difficult to picture a world with no computers whatsoever, where we rely purely on human-to-human interactions. But how did we get here?

A brief history of the evolution of HMI

Early in the days of computing, many practical applications were tied to industry and factory work. These can be seen as early use cases of robotics, where a machine, operated by a human at the push of a button, executed tasks that previously only humans could perform.

Then computers evolved, and terminals with keyboards were all around. People moved from the typewriter to these weird, plastic, white(ish) boxes packed with “micro” components. Don’t get me wrong: for the time, you could do a lot of things with them. People were heavily invested in programming and in creating games, such as text adventures, where you, as a player, could interact with a story by typing your own words in response to situational questions. Fun fact: drawing with characters on screens became so popular that we still have programs like cowsay, which generates “pictures” made of ASCII characters of a cow saying something.

Then something happened: the mouse arrived. Invented in the 1960s and popularized by personal computers in the 1980s, the mouse translated actual hand movement into selections on a computer screen and changed, once again, how we interacted with computers. It is one of the great revolutions in HMI, introducing a whole new dimension to interacting with computers. Then, a couple of decades later, the industry was taken by storm by yet another breakthrough in user interaction: multi-touch.

Touch-sensitive and capacitive displays were starting to get hot in the market, and the idea was simple: what if, instead of using a mouse, people could point at things on a computer screen and select them by tapping with their fingers?

As touch technology matured, Apple famously released the iPhone in 2007, a paradigm-shifting device that would forever change what a computer means. Today our phones (we hardly even call them smartphones anymore) are as powerful as supercomputers once were. If you look at the timeline from the mouse going mainstream to multi-touch going mainstream, it is around 20 years, give or take. Well, it has now been almost 20 years since multi-touch was released onto the world, and we are surrounded by phones, tablets and even computers that can be operated directly with our hands, not through instructions typed on a keyboard into a terminal.

If you think that was the end, and that now that we can touch our screens we are done figuring out how to interact with computers, you would be wrong. Here comes a whole wave of Virtual Reality, Augmented Reality and all sorts of blends between digital and real-world experiences. Images in 2D and 3D, in theaters and at home. Screens of all sizes, and now even a giant sphere (the Sphere in Las Vegas) that surrounds you with huge, high-resolution, high-fidelity screens (on the outside too).

Now we have reached the end, right? Spoiler alert: no.

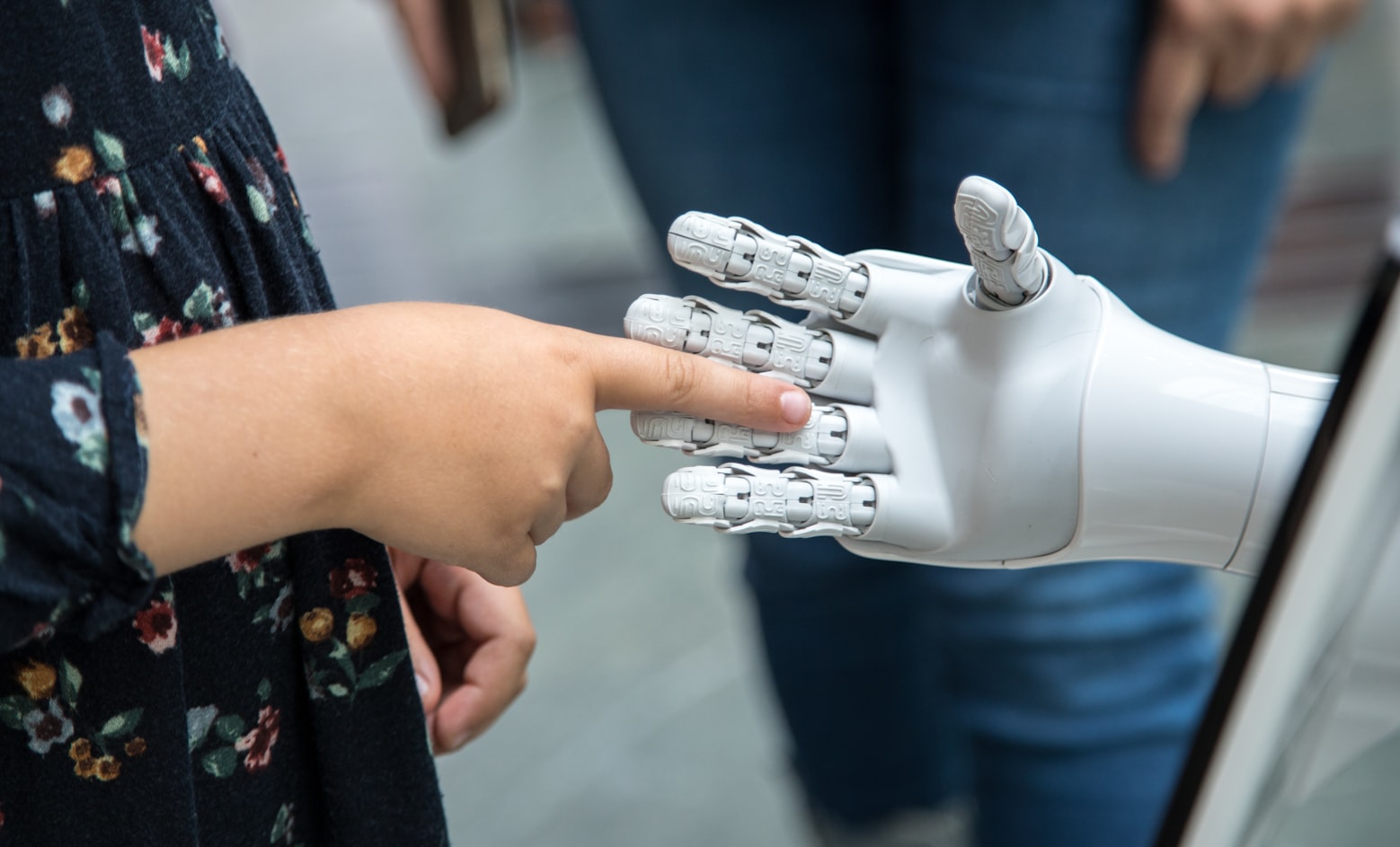

How AI is transforming HMI

ChatGPT was released in late 2022, and with it came a new way to communicate with machines, really communicate. It used to be that to get information from a system you needed to learn how that system operated, for example by figuring out the most effective way to phrase a search engine query with special keywords and operators. Now you can use natural language instead. Under the hood, ChatGPT is built on a large language model (LLM), a highly advanced form of natural language processing (NLP), which means you can ask for things in plain English and the system will interpret and process your request. That is a breakthrough in how users fetch information from the web. Google, long the king of web search, now faces an unexpected challenger. That stings even more for Google when you consider that much of the recent progress in LLMs and AI builds on the Transformer architecture introduced in “Attention Is All You Need”, a 2017 paper authored by Google researchers, but that is a topic for another day.

Here we are in 2025, and users can literally say what they want and the AI will find it, from plain English to plain English (or your local language). That’s where we are, but where are we going?

A closer look into the future

One of the most underestimated technologies I have seen in the past couple of years actually comes from Apple. Did you know you can operate your iPhone with the movements of your eyes? Eye Tracking, an accessibility feature introduced in iOS 18, makes that possible. That is significant because it hints at a not-so-distant reality where screens may no longer be necessary and the digital world can be seen and talked to directly, an experience even more human than touch input.

Apple has also invested heavily in its mixed reality headset, Vision Pro, which merges the use of digital systems with the real world around us.

Meta has made impressive progress with its AI glasses and, most recently, introduced the Meta Ray-Ban Display, which blends the digital experience even further into the real world.

Now, combine mixed reality with the ability to track your eye movements, your speech and even the electrical signals your nerves send to your muscles (which the Meta Neural Band, the wristband that pairs with the Meta Ray-Ban Display, reads at the wrist). By processing those stimuli as inputs, an AI could give you what you ask for through the most natural interface of all: the human body. Not just at your fingertips, but close to the level of your nervous system.

On top of that, we are now seeing developments in robotics from Boston Dynamics, self-driving cars from Tesla and a whole host of new breakthroughs from Neuralink, where the interface is actually implanted in your brain (isn’t that crazy???).